短時間フーリエ変換、MFCCを利用する

import numpy as np

import librosa

import librosa.display

import os

import matplotlib.pyplot as plt

from sklearn.model_selection import train_test_split

from sklearn import svm

from scipy import fftpack

# 音声データを読み込む

speakers = {'kirishima' : 0, 'suzutsuki' : 1, 'belevskaya' : 2}

# 特徴量を返す

def get_feat(file_name):

a, sr = librosa.load(file_name)

y = np.abs(librosa.stft(a))

plt.figure(figsize=(10, 4))

librosa.display.specshow(librosa.amplitude_to_db(y, ref=np.max), y_axis='log', x_axis='time', sr=sr)

plt.colorbar(format='%+2.0fdB')

plt.tight_layout()

return y

# 特徴量と分類のラベル済みのラベルの組を返す

def get_data(dir_name):

data_X = []

data_y = []

for file_name in sorted(os.listdir(path=dir_name)):

print("read: {}".format(file_name))

speaker = file_name[0:file_name.index('_')]

data_X.append(get_feat(os.path.join(dir_name, file_name)))

data_y.append((speakers[speaker], file_name))

return (np.array(data_X), np.array(data_y))

# data_X, data_y = get_data('voiceset')

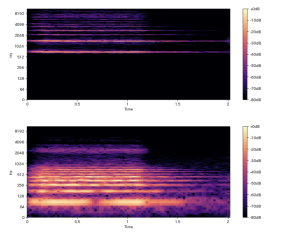

get_feat('sample/hi.wav')

get_feat('sample/lo.wav')

speakers = {'kirishima' : 0, 'suzutsuki' : 1, 'belevskaya' : 2}

# 特徴量を返す

def get_feat(file_name):

a, sr = librosa.load(file_name)

y = np.abs(librosa.stft(a))

# plt.figure(figsize=(10, 4))

# librosa.display.specshow(librosa.amplitude_to_db(y, ref=np.max), y_axis='log', x_axis='time', sr=sr)

# plt.colorbar(format='%+2.0fdB')

# plt.tight_layout()

return y

# 特徴量と分類のラベル済みのラベルの組を返す

def get_data(dir_name):

data_X = []

data_y = []

for file_name in sorted(os.listdir(path=dir_name)):

print("read: {}".format(file_name))

speaker = file_name[0:file_name.index('_')]

data_X.append(get_feat(os.path.join(dir_name, file_name)))

data_y.append((speakers[speaker], file_name))

return (data_X, data_y)

data_X, data_y = get_data('voiceset')

train_X, test_X, train_y, test_y = train_test_split(data_X, data_y, random_state=11813)

print("{} -> {}, {}".format(len(data_X), len(train_X), len(test_X)))

def predict(X):

result = clf.predict(X.T)

return np.argmax(np.bincount(result))

ok_count = 0

for X, y in zip(test_X, test_y):

actual = predict(X)

expected = y[0]

file_name = y[1]

ok_count += 1 if actual == expected else 0

result = 'o' if actual == expected else 'x'

print("{} file: {}, actual: {}, expected: {}".format(result, file_name, actual, expected))

print("{}/{}".format(ok_count, len(test_X)))

MFCC

def get_feat(file_name):

a, sr = librosa.load(file_name)

y = librosa.feature.mfcc(y=a, sr=sr)

# plt.figure(figsize=(10, 4))

# librosa.display.specshow(librosa.amplitude_to_db(y, ref=np.max), y_axis='log', x_axis='time', sr=sr)

# plt.colorbar(format='%+2.0fdB')

# plt.tight_layout()

return y

o file: suzutsuki_b06.wav, actual: 1, expected: 1

o file: kirishima_04_su.wav, actual: 0, expected: 0

o file: kirishima_c01.wav, actual: 0, expected: 0

o file: belevskaya_b04.wav, actual: 2, expected: 2

o file: belevskaya_b14.wav, actual: 2, expected: 2

o file: kirishima_b04.wav, actual: 0, expected: 0

o file: suzutsuki_b08.wav, actual: 1, expected: 1

o file: belevskaya_b07.wav, actual: 2, expected: 2

o file: suzutsuki_b03.wav, actual: 1, expected: 1

o file: belevskaya_b10.wav, actual: 2, expected: 2

o file: kirishima_b01.wav, actual: 0, expected: 0

o file: belevskaya_07_su.wav, actual: 2, expected: 2

12/12

MFCC凄すぎんだろこれ